
Just for fun, I asked ChatGPT how many Artificial Intelligence (AI) tools exist that support education. I recognize that this may not be the best prompt, but just humor me. The results indicated that it was difficult to narrow down an exact number because tools are emerging daily. Let that sink in for a moment. A rough estimate from ChatGPT indicated tens of thousands of platforms across various industries, with about 50 that could be curated as “popular tools.” Rapid growth occurred between 2022 and 2023, driven by the introduction of generative AI (National Education Association, 2024). With all of that noise, educators face increasing pressure to adopt AI platforms quickly, risking a repeat of a familiar pattern: innovation without implementation. Educational agencies would do better to shift the focus from AI as an innovation to a different question: “How do we use AI to strengthen systems and efficiencies, rather than creating more initiatives and fragmentation?”

AI Is Not the Innovation

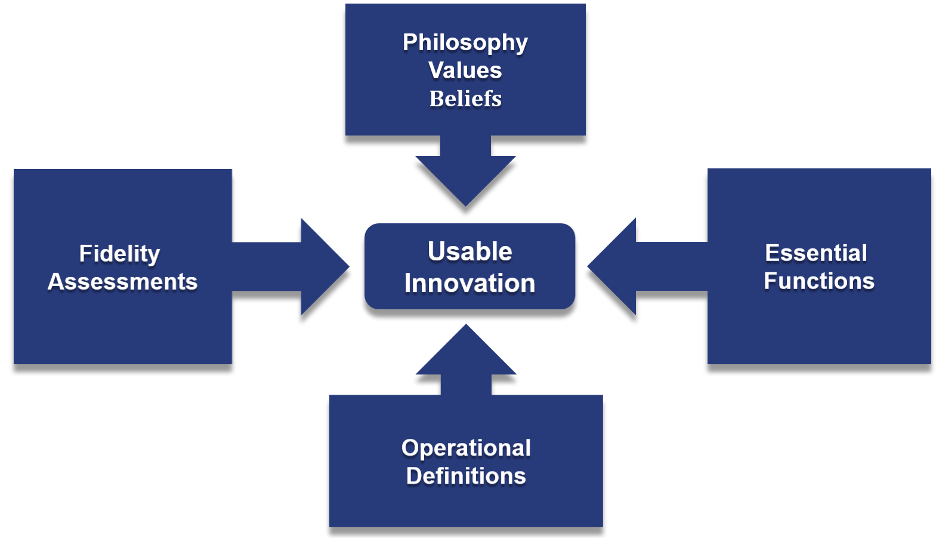

Let’s be very clear: AI is a tool, not the innovation. And I mean no disrespect to our friends ChatGPT, Claude, and Gemini, but these AI platforms support our efficiency by recognizing patterns, scanning crazy amounts of information and data to provide summaries, and, well, let’s be honest, writing my emails at times. AI platforms do not have the four basic components of a usable innovation, program, or practice. To examine this concept further, review those four components, shown in Figure 1 and listed below it.

Figure 1. Usable Innovation Diagram

From: National Implementation Research Network. (2023). Usable innovations overview. University of North Carolina at Chapel Hill, Frank Porter Graham Child Development Institute. https://implementation.fpg.unc.edu/resource/usable-innovations-overview/

- Clear description of the innovation, including philosophy, values, and beliefs

- Clear essential functions and key components

- Operational definitions of essential functions

- Evidence of effectiveness through fidelity measures

Most AI platforms lack these core components of a usable innovation or evidence-based practice. We need to stop thinking about AI as something we implement and instead view it as a tool to improve implementation efficiency.

Additionally, even if you view AI as a tool rather than an innovation, common misconceptions persist about its use. For example, we often hear the following:

- AI will “fix” capacity problems. AI tools can be used to draft processes, develop meeting agenda templates, and identify patterns in implementation data. They do not do the hard work of addressing adaptive challenges, putting processes and policies into action, and ensuring knowledge transition occurs.

- AI replaces the functions of staff. AI cannot make decisions for you. Trust me, I had a long conversation with ChatGPT on this. As leaders, we need to consider not only the data analysis we may use AI to support, but also the emotional intelligence to understand the impact of our decisions. We have also seen a growing trend in AI companies trying to replace coaches. While this is a much larger topic, generative AI supports teachers in answering questions and developing lesson plans or materials; it does not support teachers in internalizing or understanding the art of their work, or in shifting their instructional approach.

- AI can replace the work of our Implementation Team. In a recent article, Jayshree Seth and Amy C. Edmondson (2026) examine how AI can affect a team’s psychological safety. Think about how much time you’ve spent building trust and transparency within your implementation team, and how quickly you can start questioning each other’s work after encountering low-quality work produced with AI. The authors dive into the idea that “the same AI tools that promise to enhance productivity can create predictable patterns of team dysfunction that mirror classic organizational behavior problems.” When you embed AI in an implementation team without clarity of use, you may unintentionally accelerate confusion, reinforce biases, create dependency, and automate poor decision-making.

In short, AI supports how work gets done, not whether it gets done well.

Revisiting the Success Formula in an AI Context

Figure 2. Formula for Implementation Success

From: National Implementation Research Network. (2023). Active implementation overview. University of North Carolina at Chapel Hill, Frank Porter Graham Child Development Institute. https://implementation.fpg.unc.edu/resource/1429/

When considering the components of the formula, there is room to use AI to support implementation. Think about identifying an effective practice. Although AI is not a usable innovation, it has access to information that could support your selection of an evidence-based practice or program, such as literature reviews on promising practices, evaluation results on a specific program, or reports on a new curriculum. It can quickly find and provide that information. However, team members will want to verify the accuracy of the information. To address effective implementation, AI could be used to draft data discussion protocols, written procedures for selection and alignment, or even a training plan template. To address context, it could make data more visible in support of improvement cycles, for example by developing visualizations or a quick survey on meeting effectiveness.

One resource that can serve as a practical tool to strengthen systems work around AI is Innovate AI’s framework. Rather than positioning AI as a set of tools to adopt, the framework serves as a systems improvement tool. It prompts districts to examine the conditions that enable effective use (student agency, staff empowerment, and leader condition-setting) and to gather structured data to assess progress across those domains. In that way, it operates much like an implementation capacity tool: clarifying roles, surfacing gaps, and creating feedback loops for continuous improvement. For K–12 leaders, this makes AI less about experimentation and more about intentional system design, aligning innovation with infrastructure, leadership behaviors, and measurable outcomes.

When considering the Implementation Success Formula, AI does not replace clear, usable practices or programs. It cannot address your adaptive challenges when building competency or addressing organizational drivers, nor does it know your context as you do. Also, when the implementation context is not intentionally addressed, AI can magnify existing inconsistencies and system fragmentation. SISEP encourages you to ask yourselves the following key questions when beginning to use AI in your implementation work:

- Who benefits from this tool?

- Who may be excluded or harmed?

- How are decisions being made, and what role does AI play in our process?

- What processes will be impacted by using AI, and what will need to change?

As you work through these questions, think about your agency’s culture and understanding of AI. What policies and processes are in place? Are you able to influence policy using implementation practices? Take your context into consideration as you embed the use of AI into your implementation efforts and systems.

Looking Ahead: Implementation Still Requires Humans

We recognize that the speed of implementation needs to be addressed. Learning and applying the Active Implementation Frameworks effectively can take years, and AI can accelerate this work, but we encourage using it with caution. AI is not a usable innovation, nor does it replace the trust, relationships, leadership, or learning that occurs within teaming and implementation, all of which are key components of systems work and organizational change.

References

Innovate AI. (n.d.). AI Innovation Index framework. https://innovate-ai.org/framework/

National Education Association. (2024, June 26). III. The current state of artificial intelligence in education. NEA. https://www.nea.org/resource-library/artificial-intelligence-education/iii-current-state-artificial-intelligence-education

Seth, J., & Edmondson, A. C. (2026, February 4). How to foster psychological safety when AI erodes trust on your team. Harvard Business Review. https://hbr.org/2026/02/how-to-foster-psychological-safety-when-ai-erodes-trust-on-your-team