|

We only add fidelity measures and reporting if:

- We want to support teachers and staff in learning and using new instructional and intervention practices and programs.
- We want to have some evidence that our training, professional development and coaching efforts are effective.
- We want to make smart and efficient decisions about improvement strategies.

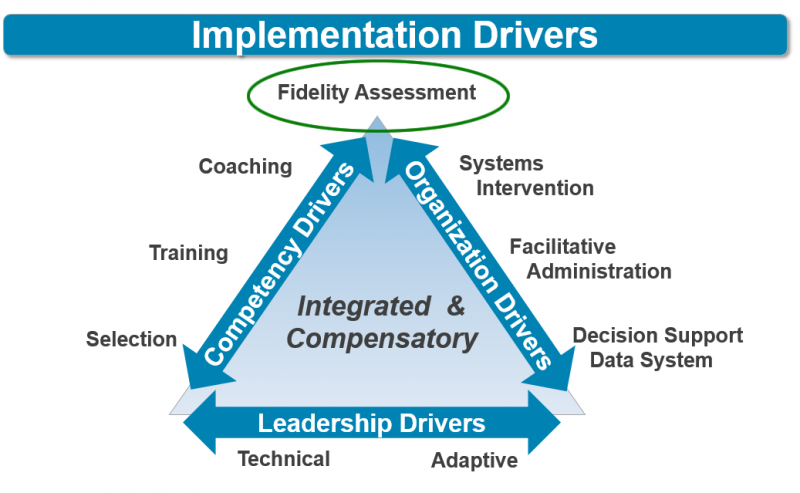

Fidelity assessments are system diagnostic tools.

If we are not getting the results we’d hoped for, we need to know why. Is it because we are not doing what is required? Or is it because we’ve selected interventions, supports, and practices that are not right for our students? Without fidelity data to review along with our student outcome data, we resort to random acts of improvement. We may stop using programs and practices that would actually be effective if teachers were supported in using them as intended. And then we spend a lot of time and money needlessly starting over. This reinforces a justifiable “this too shall pass” perspective on the part of teachers and staff.
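For teams that keep their data in a spreadsheet or script, the reasoning above can be sketched as a small lookup. This is only an illustration: the function name `diagnose`, the yes/no inputs, and the returned labels are hypothetical, and each team would have to decide for itself what counts as "adequate" fidelity or outcomes. The low-fidelity, good-outcomes case is not discussed in this brief; the "investigate" label for it is an assumption.

```python
def diagnose(fidelity_ok: bool, outcomes_ok: bool) -> str:
    """Suggest where to look next, given two yes/no readings.

    Hypothetical helper: the labels are illustrative, not an official rubric.
    """
    if fidelity_ok and outcomes_ok:
        # Practice is used as intended and results are on track.
        return "sustain"
    if not fidelity_ok and not outcomes_ok:
        # We are not yet doing what is required: strengthen training,
        # coaching, and other supports before judging the practice itself.
        return "support"
    if fidelity_ok and not outcomes_ok:
        # Used as intended but not working: the practice may not be
        # right for our students.
        return "reselect"
    # Assumption (not from this brief): good outcomes with low fidelity
    # mean something else may be driving results, so look closer.
    return "investigate"


if __name__ == "__main__":
    print(diagnose(fidelity_ok=False, outcomes_ok=False))
```

Without the fidelity reading, the "support" and "reselect" cases look identical in the outcome data, which is how effective programs end up being dropped.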

Fidelity assessments (and data) are not ways to reward, rank, or rate classroom teachers.

Fidelity assessment is a way to determine if there is sufficient, effective support for teachers and staff who are expected to engage students with diverse needs through sound instructional practices to achieve positive outcomes. High-quality instruction and interventions done with fidelity are in the best interests of every student and are everyone’s responsibility.

Getting Started

The program or practices you selected, based on student needs and best evidence, may come with a fidelity assessment process. When there is an existing fidelity assessment process, you can create and adhere to a Fidelity Assessment Delivery Plan. That plan details the measurement processes, data sources, and frequency of observations, reports, and data reviews.

What if the program or practice we have selected does not provide a fidelity assessment process?

Getting your own process in place first requires clearly defining the program and identifying the core components that are most likely to improve student outcomes (see AI Module 6: Usable Intervention). The next step is operationalizing these core components so that the program is doable, teachable, measurable, and repeatable (see Practice Profile Planning Tool). For selected core components, you will need to identify measurable indicators, e.g., lesson plans, classroom observation data, and interviews with parents, teachers, and students (see AI Activity 7.2 Developing a Fidelity Assessment). Then try it out with a few teachers and classrooms to see how well it works, make adjustments, and try again: Plan, Do, Study, Act your way to a better process.
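If it helps to see what "measurable indicators" might look like once recorded, here is a minimal sketch that tallies, for each core component, the share of observed indicators that were met. Everything in it is an assumption made for illustration: the component names, the met/not-met recording, and the simple proportion used as a score. A real fidelity measure would come out of the Practice Profile and the pilot cycles described above.

```python
from collections import defaultdict


def fidelity_by_component(observations):
    """Return the proportion of indicators met for each core component.

    `observations` is a list of (component, indicator_met) pairs, for
    example one pair per indicator checked during a classroom visit.
    Hypothetical structure, not a prescribed data format.
    """
    met = defaultdict(int)
    total = defaultdict(int)
    for component, indicator_met in observations:
        total[component] += 1
        met[component] += int(bool(indicator_met))
    return {component: met[component] / total[component] for component in total}


if __name__ == "__main__":
    # Made-up example data for two hypothetical core components.
    sample = [
        ("explicit modeling", True),
        ("explicit modeling", False),
        ("guided practice", True),
        ("guided practice", True),
    ]
    for component, share in fidelity_by_component(sample).items():
        print(f"{component}: {share:.0%} of indicators met")
```

Reporting by component rather than by teacher keeps the focus on where supports need strengthening, in line with the point that fidelity data are not a way to rank or rate classroom teachers.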

Getting Better

Take a deeper dive on developing a Fidelity Assessment with these resources on the Active Implementation Hub:

• Module 7: Fidelity Assessment

• Lesson 2: Usable Interventions

|